The overall migration process is as follows: export the jobs from the open source scheduling engine using the migration assistant's job export capability, upload the job export package to the migration assistant, and then import the mapped jobs into DataWorks through task type mapping.

For each supported open source scheduling engine, the DataWorks migration assistant provides a corresponding task export scheme. The migration assistant supports migrating big data development tasks from an open source workflow scheduling engine to DataWorks. Versions supported for Airflow migration: Python >= 3.6.x and Airflow >= 1.10. The basic process is shown below.

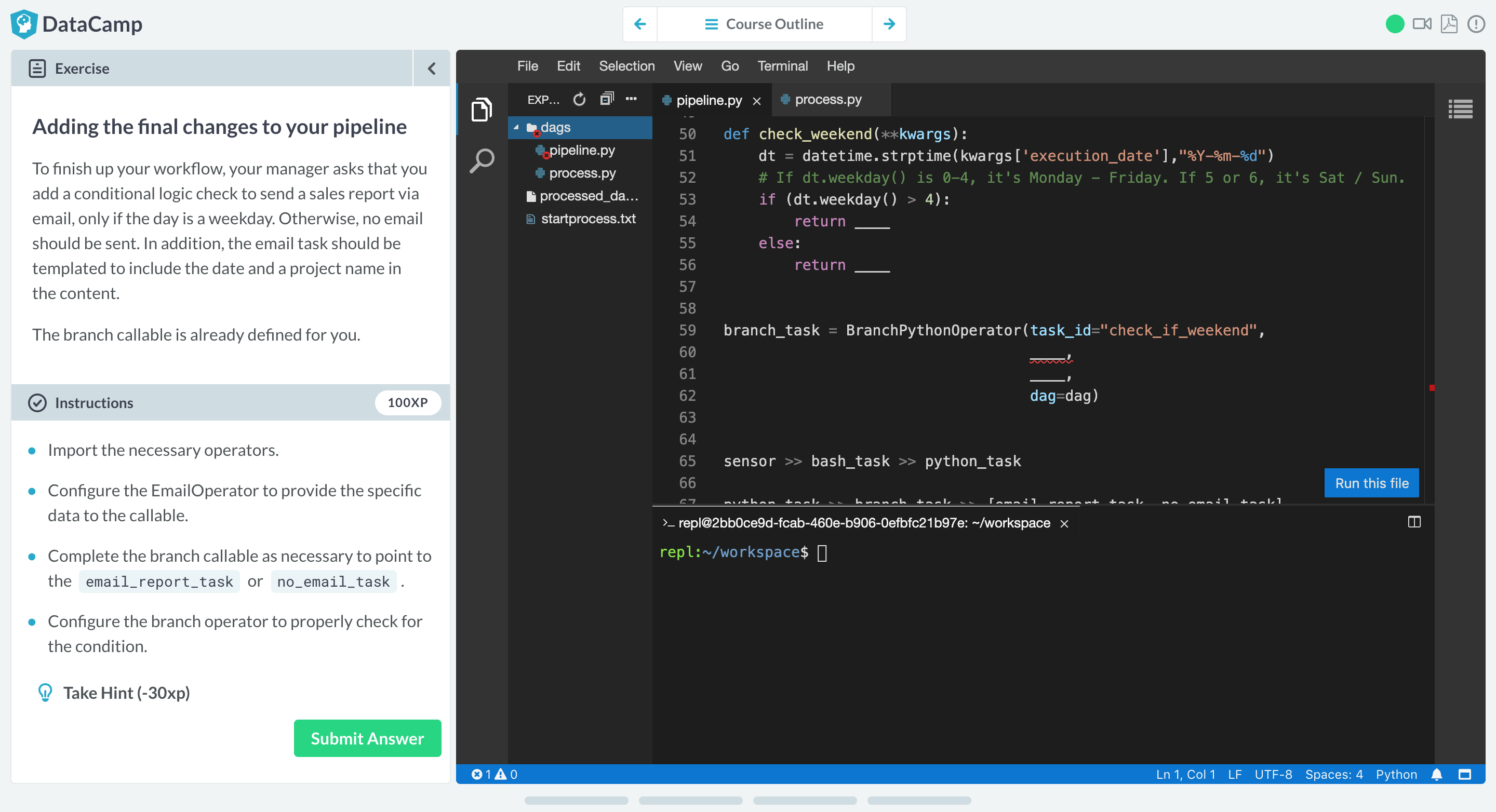
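
Before exporting, it can help to confirm that the environment meets the version requirement stated above (Python >= 3.6.x, Airflow >= 1.10). The following is a minimal sketch, not part of the migration assistant: the helper names `parse_version` and `meets_requirements` are hypothetical, and it assumes the requirement is a pair of lower bounds with no stated upper bound.

```python
import re
import sys

# Lower bounds taken from the requirement stated in this article.
MIN_PYTHON = (3, 6)
MIN_AIRFLOW = (1, 10)


def parse_version(text):
    """Extract the leading numeric components, e.g. '1.10.15' -> (1, 10, 15)."""
    match = re.match(r"\d+(?:\.\d+)*", text.strip())
    if not match:
        raise ValueError("unrecognized version string: {!r}".format(text))
    return tuple(int(part) for part in match.group(0).split("."))


def meets_requirements(python_version, airflow_version):
    """Return True if both versions satisfy the assumed lower bounds.

    python_version: a tuple such as sys.version_info
    airflow_version: a string such as airflow.__version__
    """
    python_ok = tuple(python_version[:2]) >= MIN_PYTHON
    airflow_ok = parse_version(airflow_version)[:2] >= MIN_AIRFLOW
    return python_ok and airflow_ok


if __name__ == "__main__":
    try:
        import airflow  # only present where Airflow is installed
    except ImportError:
        print("Airflow is not installed in this environment")
    else:
        ok = meets_requirements(sys.version_info, airflow.__version__)
        print("supported" if ok else "not supported")
```

Comparing versions as integer tuples avoids the string-comparison pitfall where `"1.9"` would sort after `"1.10"`.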

This article describes how to migrate jobs from the open source Airflow workflow scheduling engine to DataWorks. DataWorks provides a task migration function that supports the rapid migration of tasks from the open source scheduling engines Oozie, Azkaban, and Airflow to DataWorks.